EEG source analysis in epileptology: an interview with Stefan Rampp

Dr. Stefan Rampp (MD) studied medicine at the University Erlangen–Nürnberg, Germany, where he graduated in 2004. He completed his doctoral thesis in 2006. From 2004 to 2014, he worked at the Epilepsy Center, Department of Neurology and at the Department of Neurosurgery (University Hospital Erlangen, Germany). In 2006, he also joined the Department of Neurosurgery, University Hospital Halle (Saale), Germany. In May 2016, he completed his habilitation thesis in experimental neurosurgery. He is currently the chair of the magnetoencephalography (MEG) lab of the University Hospital Erlangen, Germany.

His research includes diverse topics, such as MEG, surface and invasive electroencephalography (EEG), as well as MRI analysis and postprocessing for epileptic focus localization, functional mapping and neurocognitive research. Further areas of interest are intraoperative monitoring, biosignal analysis and software development.

1. Could you introduce yourself and explain what began your interest in EEG and MEG research?

My name is Stefan Rampp, I’m an MD by trade and have been working with EEG and MEG for about 20 years. My interest in biosignal analysis probably started with my thesis, which applied a classification approach to invasive EEG to investigate epileptic seizures. Although I didn’t use the term back then (and probably had never heard it), the approach was an early machine-learning application. Due to the collaboration with the Epilepsy Center (Erlangen, Germany), I quickly became involved with MEG. After finishing medical school, I was hired by the Epilepsy Center and spent some of my time working at the MEG lab. Both at the Epilepsy Center and the MEG lab, we already applied source localization techniques to get a better idea of where the patients’ epilepsies were coming from. I had always been interested in neuroscience, which certainly contributed to the decision to study medicine. The opportunity to work at the MEG lab deepened this interest even more.

2. What are the main challenges associated with EEG source analysis in the field of epilepsy?

“While I don’t think that the basic idea of source analysis is hard to understand, the way you have to think about it is rather different from what you normally do in an everyday clinical situation.”

I’d say that most current problems are practical ones. While I don’t think that the basic idea of source analysis is hard to understand, the way you have to think about it is rather different from what you normally do in an everyday clinical situation. It also has a somewhat ‘mathematical atmosphere’ to it, although of course the actual mathematical complexity is hidden behind the scenes of the software we use. It’s also not necessary to understand the depths of the underlying theory to use it (although having an idea helps).

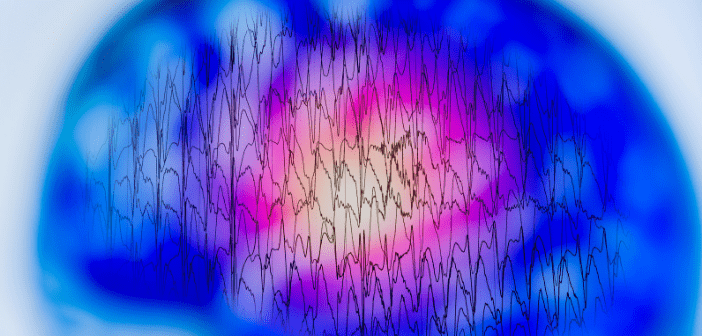

Then, there’s the EEG (or MEG) itself. Neurologists have quite a bit of experience with clinical interpretation; however, clinical routine doesn’t leave much room for the intricacies and complex interactions of signal generation, for example the influence of volume conduction and subtle activity hidden in noise. These aspects, however, significantly influence how the EEG appears and should be considered for interpretation (irrespective of whether we do this visually or using source analysis). Since such details are not obvious in clinical routine, the conventional clinical use of EEG usually accepts them as a principal limitation and is not aware that they can be addressed. This means that source analysis solves a problem that classical EEG teaching is either not aware of or simply accepts as a limitation of the technique.

Of course, a practical issue is also the software, which is necessarily more complex than most of what epileptologists are used to in clinical routine. There has been quite a bit of improvement over the years, but there will always be a limit beyond which simplified software becomes wrong. Standardized approaches and guidelines would certainly help, and in fact the MEG and EEG communities have worked on, and are actively developing, practice guidelines.

3. How does BESA’s portfolio help to overcome these challenges?

BESA (Gräfelfing, Germany) provides a combination of user interface and tools for epileptic focus localization, which are easy enough to use. It certainly takes a little bit of training to become familiar with the user interface, but after that, using it is intuitive and fast. There are many small details that epileptologists will recognize from other clinical EEG software, which makes the learning curve a little less steep. What I think is the most helpful aspect is that there are standard pipelines available for both MRI preparation and the actual source localization. The latter is specifically implemented for evaluation of focal epileptic discharges and covers most cases. If it gets more complex, you have to leave this suggested route, but then you also have a wide range of tools available to work with the data in a more flexible way.

There is still potential for improvement, and I keep pointing out suggestions to the developers that may (or may not) be helpful. Generally, they are quite open about this and consider adding some of this to future releases. The standard analysis pipeline of course also follows a certain principle or ‘school’ of EEG/MEG analysis: it is very much focused on the analysis of averaged discharges. Since good results have been published using it, there’s nothing wrong with this. However, there are other approaches, such as single-spike analysis, and supporters of those may have more difficulty applying them in BESA.
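To illustrate what analyzing averaged discharges means in practice, here is a minimal sketch in Python with NumPy. It is not BESA's implementation: the function name `average_spikes`, the synthetic data, the spike template and the window lengths are all assumptions made for illustration. The idea is simply that averaging many time-locked epochs reduces uncorrelated background activity by roughly the square root of the number of spikes.

```python
import numpy as np

def average_spikes(eeg, spike_samples, pre, post):
    """Average fixed-length epochs cut around marked spike samples.

    eeg: array of shape (n_channels, n_samples)
    spike_samples: sample indices of the spike markers
    pre, post: number of samples kept before / after each marker
    """
    epochs = [eeg[:, s - pre:s + post] for s in spike_samples
              if s - pre >= 0 and s + post <= eeg.shape[1]]
    if not epochs:
        raise ValueError("no complete epochs available")
    return np.mean(epochs, axis=0)

# Synthetic demonstration (all values are hypothetical).
rng = np.random.default_rng(0)
n_channels, n_samples = 4, 20000
template = np.hanning(40)                    # stand-in for a spike waveform
spike_samples = np.arange(200, 19800, 200)   # evenly spaced spike markers
eeg = rng.normal(0.0, 1.0, (n_channels, n_samples))  # background "noise"
for s in spike_samples:
    eeg[0, s:s + 40] += template             # spikes only on channel 0

avg = average_spikes(eeg, spike_samples, pre=20, post=60)
print(avg.shape, avg[0].max(), avg[1].std())
```

In the single sweeps the spike is about as large as the background activity; in the average, the channel without spikes flattens out while the spike on channel 0 remains.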

4. What interesting research has been published using the BESA workflow?

“While there are of course cases where the irritative zone is very different from the epileptogenic zone, I’d believe that some of this bad reputation is due to the inability of visual analysis to look at early, very subtle parts of a spike.”

I personally find two papers quite interesting: Mălîia et al. (2016) and Scherg et al. (2002), as well as the follow-up in spirit, Beniczky et al. (2016). Mălîia et al. show the importance of analyzing early components of interictal spikes to avoid some of the propagation that will have already happened at the peak of a spike. The peak is usually what we look at in conventional EEG analysis, because this is of course the most obvious feature and has the highest amplitude. It is therefore no surprise that interictal patterns are usually considered to be less specific for the epileptogenic zone. While there are of course cases where the irritative zone is very different from the epileptogenic zone, I’d believe that some of this bad reputation is due to the inability of visual analysis to look at early, very subtle parts of a spike.

Scherg et al. describe the calculation and use of so-called source montages. Beniczky et al. then show the application of this technique to rapidly evaluate MEG recordings. Source montages are basically rough projections of EEG or MEG data into source space, which yield a depiction of what the time course of activity would look like if there were invasive electrodes distributed over the brain. Other software and studies call the general concept ‘virtual electrodes’ or ‘virtual channels’. While these of course do not reach the signal-to-noise ratio of ‘true’ invasive recordings, they offer several advantages for clinical practice. The main one is that they summarize the data from many channels (100–300 in MEG and high-density EEG) in a much lower number (20–50). While I would otherwise have to inspect MEG/HD-EEG using several subgroups of channels, that is, going through the data several times, source montages allow me to do this in one go, saving quite a bit of time. Then, since each source channel focuses on a specific region, artifacts and unrelated activity not originating from that area are dampened to some degree, which also makes spike detection much easier and potentially even more sensitive.
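The general ‘virtual channel’ idea can be sketched in a few lines of Python with NumPy. This is a generic linear projection under stated assumptions, not the source montage calculation described by Scherg et al. or implemented in BESA: the leadfield here is a random matrix rather than one derived from a head model, and the function name `source_montage`, the regularization value and the channel counts are hypothetical.

```python
import numpy as np

def source_montage(leadfield, reg=1e-2):
    """Build a (n_sources, n_sensors) spatial filter from a leadfield.

    Uses a Tikhonov-regularized pseudo-inverse: W = (L^T L + lam*I)^-1 L^T.
    leadfield: array of shape (n_sensors, n_sources)
    reg: regularization strength relative to the mean diagonal of L^T L
    """
    L = np.asarray(leadfield, dtype=float)
    gram = L.T @ L
    lam = reg * np.trace(gram) / gram.shape[0]
    return np.linalg.solve(gram + lam * np.eye(gram.shape[0]), L.T)

# Synthetic demonstration (random leadfield, purely illustrative).
rng = np.random.default_rng(1)
n_sensors, n_sources, n_samples = 64, 8, 500
leadfield = rng.normal(size=(n_sensors, n_sources))

sources = np.zeros((n_sources, n_samples))
sources[2, 240:260] = np.hanning(20)         # a "spike" in source region 2
sensors = leadfield @ sources + 0.5 * rng.normal(size=(n_sensors, n_samples))

W = source_montage(leadfield)
virtual = W @ sensors                        # 64 sensor traces -> 8 virtual channels
strongest = int(np.argmax(np.abs(virtual).max(axis=1)))
print(virtual.shape, strongest)
```

The 64 sensor traces are condensed into 8 virtual channels, and the simulated discharge stands out on the channel for the region that generated it, while uncorrelated sensor noise is attenuated by the spatial filter.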

5. Can you tell us more about the importance of transforming EEG signals from the scalp back to the brain?

In addition to the practical value of projecting the EEG back to the brain using source montages, the importance is of course that you turn EEG from a regional electrophysiological modality into a quite accurate imaging method. Having access to localizations accurate enough to distinguish gyri (depending on data quality and number of channels, of course) gives EEG a very different meaning than the more diffuse regional to sub-regional verbal description of visual interpretation. There is a large body of evidence out there (including prospective studies) that clearly shows the clinical value of source analysis and its significant contribution to the spectrum of presurgical evaluation.

6. How do you hope EEG source analysis might affect the frontline of epilepsy care?

That depends on where the ‘frontline’ is… The true frontline is probably not running along the perimeter of epilepsy centers and epilepsy surgery, but rather in the practices that need to decide whether a patient can be treated with antiepileptic drugs or whether it would make sense to consider surgery. To be honest, I don’t really think that this is the place for source analysis, as the exact location of the epileptogenic zone is not very important at that stage. However, as soon as a patient steps through the entrance of an epilepsy center, even if only coming for a first conversation and an EEG, (a limited form of) source analysis could be used to decide early on whether epilepsy surgery could be a therapy option and how extensive the presurgical evaluation needs to be. I’d also hope that source analysis would be used by more centers, especially as they already have the EEG data available. For some reason, the prevalent opinion is that you’d need a special form of EEG, such as HD-EEG (or MEG as ‘magnetic’ EEG), to perform source analysis. While more channels usually help, good information can already be obtained with around 30 electrodes (although the results will be coarser).

7. In your opinion, what do you see as the biggest area of growth for EEG analysis in epilepsy and where do you think EEG will have the largest impact in the coming years?

“The largest impact of EEG in the future could be outside of the field of epileptology. There are more clinical applications for example in functional mapping, traumatic brain injury and prognosis of dementias.”

I think there is quite a bit of potential in resting state analysis. There’s evidence that the visually obvious interictal discharges and ictal rhythms might just be the tip of the iceberg and that in fact there’s much more going on in the background activity. Approaches leveraging subtler changes, for example in oscillatory activity, might provide valuable localization information, potentially even independent of spikes and seizures. On the practical side, some of these are automated procedures that require only minimal interaction by the physician. They may therefore provide objective information at the press of a button.

The largest impact of EEG in the future could be outside of the field of epileptology. There are more clinical applications for example in functional mapping, traumatic brain injury and prognosis of dementias. All these applications rely on improved analytical methods but certainly also on a better understanding of the physiological and pathophysiological mechanisms. I’m not saying that EEG will not have even more impact on epileptology. Its role is already significant; however, a more widespread adoption of available methods would have the most relevant impact on patients.

Disclaimer

The opinions expressed in this interview are those of the interviewee and do not necessarily reflect the views of Neuro Central or Future Science Group.